In today’s digital age, businesses increasingly rely on software to deliver services, engage customers, streamline operations, and drive innovation. The way software is deployed has become a make-or-break factor for thriving in the digital realm: efficient deployment methods help organizations stay competitive, adaptable, and responsive to market changes.

Software deployment is the process of taking a piece of code, often the result of countless hours of development, and making it operational in an environment where end users can access and interact with it. This crucial step bridges creative concepts and practical utility: it is where lines of code become tools, platforms, and solutions that empower both organizations and individuals.

In this blog post, we explore the evolution of deployment methods, from manual setups on physical machines to virtualized environments and cloud-native containerization. We’ll see how each advance improved reliability, availability, scalability, and operational efficiency, ultimately reducing costs and enhancing customer satisfaction.

Rise of the (Virtual) Machines

In the early days of computing, software deployment was entirely manual. Engineers had to painstakingly adapt the installation process to specific operating systems (OS) and often wrestled with system drivers to ensure compatibility. Every OS update carried a significant risk that the deployment would break due to incompatibilities. The entire process was resource-intensive, prone to human error, and lacked standardization: reliability was a constant challenge, scalability was limited by hardware constraints, and downtime was common.

Virtual Machines (VMs)

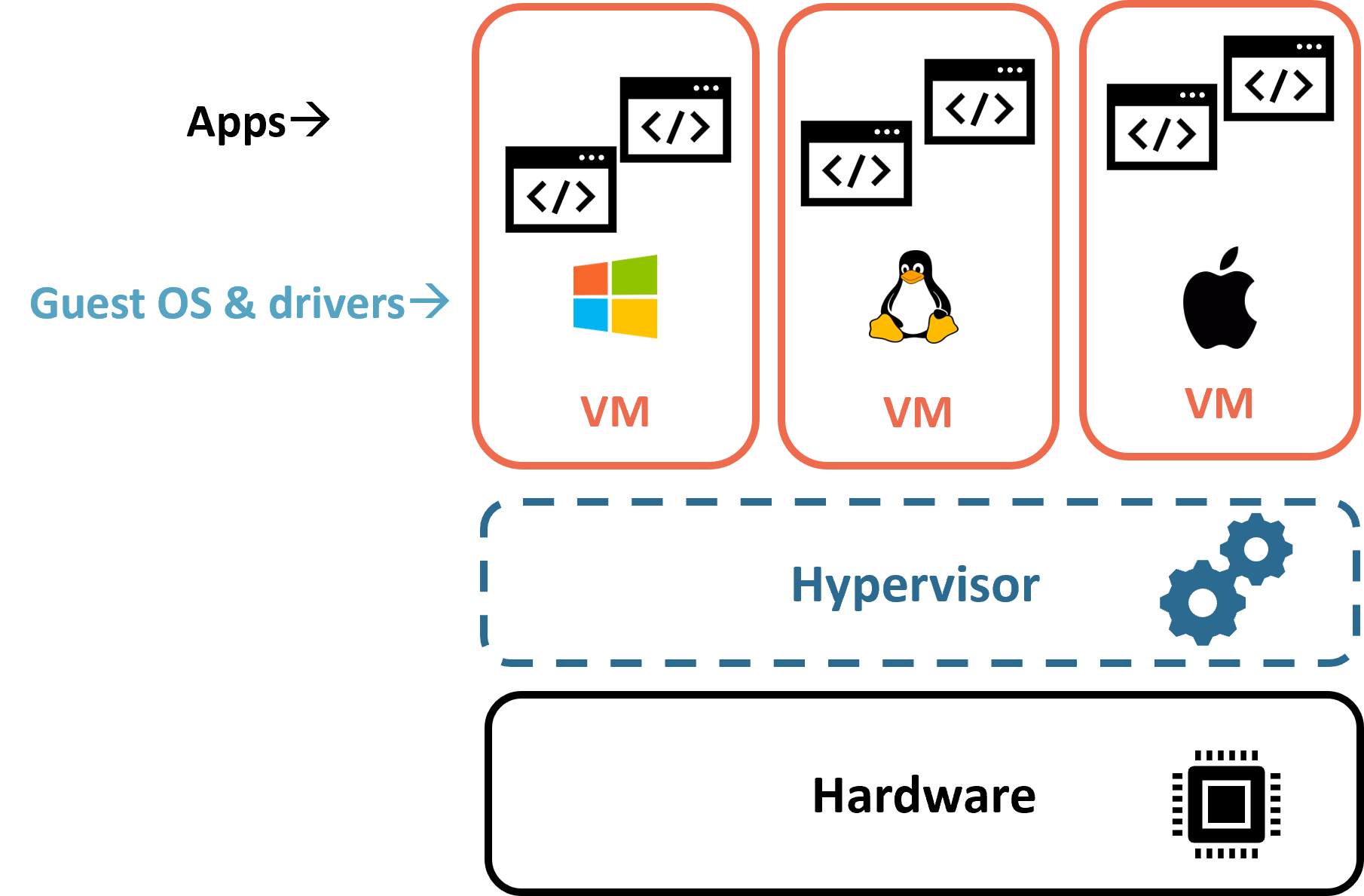

Enter virtualization. The emergence of virtualization platforms such as VMware, VirtualBox, Xen, and Hyper-V marked a turning point for software deployment by bringing virtual machines (VMs) into mainstream use. A VM is a software-based replica of a physical machine, which allows multiple operating systems and applications to run independently on a single physical server. This drastically simplified deployment by encapsulating an entire software environment, operating system included, within a single VM.

VMs fundamentally reshaped deployment processes. Engineers no longer had to configure software for specific hardware; instead, they worked in standardized virtual environments, which made deployments consistent. Applications could also be packaged as VM images, making them portable across infrastructures.

Containers

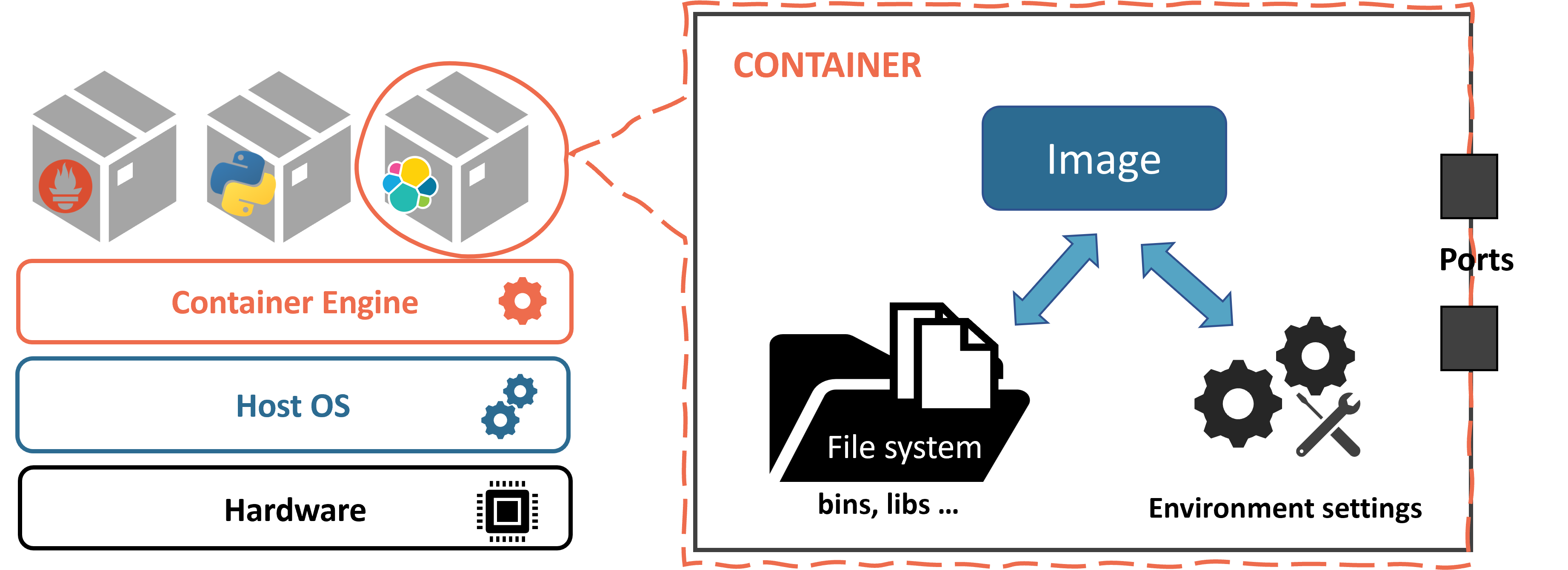

The evolution of virtualization continued with containers, a lighter-weight form of virtualization that raised the level of abstraction. Instead of virtualizing an entire machine, containers virtualize only part of the operating system: each container is an isolated environment with its own file system, binaries, libraries, and configuration files, but it runs on the kernel of the host operating system. Applications inside a container run with their own code, dependencies, and virtualized OS resources, isolated from other containers and from the host OS.

Because containers share the host OS kernel instead of booting a full guest operating system like a VM, they use resources more efficiently. They need less memory, start quickly, and offer a standardized, predictable deployment environment that simplifies packaging and makes builds reproducible across settings. Isolating processes within containers also improves system stability and security.

Docker, one of the pioneering containerization platforms, led the way in popularizing this technology. The containerization landscape keeps evolving, however, and new tools continue to emerge. Docker is still widely used today, alongside other popular tools such as containerd, Podman, LXD, and runc.

While VMs brought many benefits in simplifying deployment, optimizing resource utilization, and enhancing security and stability, containers completely changed the way we approach deployment. They revolutionized the deployment pipeline by making it possible to build, test, and deploy applications consistently across diverse environments. Because containers are portable, applications run reliably on different infrastructure platforms, whether in on-premises data centers or in the cloud. This consistency and efficiency dramatically reduce deployment friction, letting engineers focus on writing code and making sure applications work as intended.

Reaching for the Clouds

Cloud computing, and specifically Infrastructure as a Service (IaaS), has transformed how businesses manage computing resources, shifting them away from traditional on-premises infrastructure. IaaS offers virtualized resources such as servers and storage, provided by global cloud leaders like AWS, Azure, and Google Cloud. Because organizations no longer need to own physical hardware, they can focus on developing and deploying software. Importantly, IaaS marks a shift from managing individual servers to orchestrating cloud resources collectively, which reduces costs and increases flexibility.

Containers and the cloud go hand in hand, forming a symbiotic relationship that amplifies the benefits of both. The leading cloud providers all embrace container technology, offering services such as Amazon ECS, Azure Kubernetes Service (AKS), and Google Kubernetes Engine (GKE) that simplify container orchestration and scaling. Combining containers with the cloud pairs the cloud’s scalability and reliability with the efficiency and portability of containers. Capacity can be scaled to match demand precisely, while redundancy and failover mechanisms keep services highly available and reliable. Engineers can therefore focus on software innovation rather than infrastructure management, which speeds up application development and paves the way for cloud-native development.

Cloud-Native Development

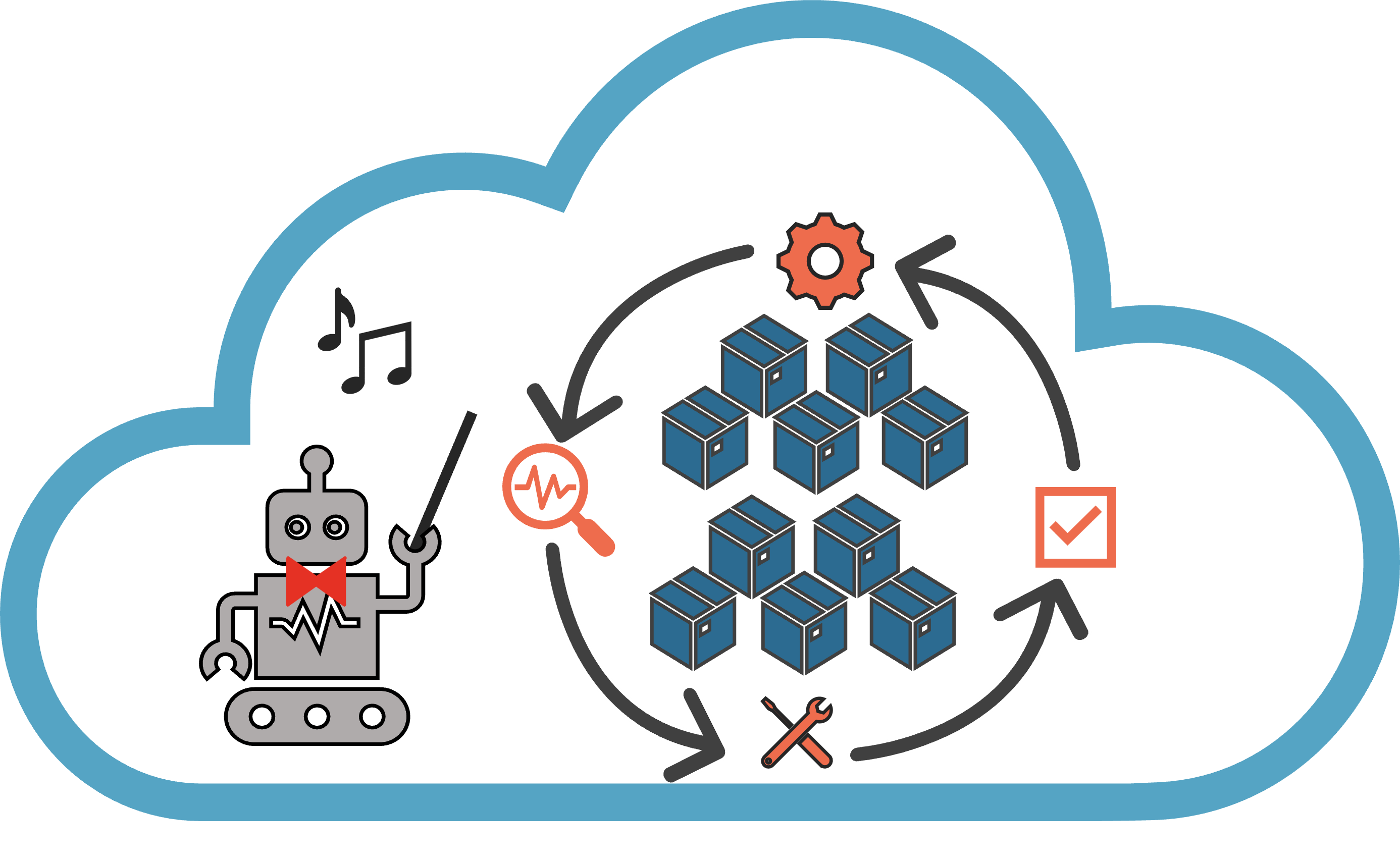

Cloud-native application development represents a paradigm shift in software engineering, optimizing applications for the dynamic and scalable cloud environment. These apps are agile, scalable, and resilient, often packaged in containers and managed efficiently through container orchestration.

Container orchestration is one of the key enablers of cloud-native applications, and Kubernetes has become the de facto standard for orchestrating containers at scale. Orchestrators automate tasks such as deployment, scaling, load balancing, and self-healing, so applications can be scaled up or down automatically based on demand. Auto-scaling lets applications absorb traffic spikes without manual intervention, while automated failover provides high availability. Together, these capabilities give cloud-native applications a robust foundation that keeps them responsive and available even amid hardware failures or other disruptions.
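To make auto-scaling less abstract, here is a minimal Python sketch of the proportional rule the Kubernetes Horizontal Pod Autoscaler documents for deciding how many replicas to run: desired replicas = ceil(current replicas × current metric / target metric). The function name, the min/max bounds, and the 10% tolerance default are assumptions for illustration; the real controller applies additional rules that are not modeled here.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,
    max_replicas: int = 10,
    tolerance: float = 0.1,
) -> int:
    """Estimate how many replicas an autoscaler should run (illustrative sketch).

    Proportional rule:
        desired = ceil(current_replicas * current_metric / target_metric)
    The min/max bounds and tolerance are hypothetical defaults.
    """
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    ratio = current_metric / target_metric
    # Inside the tolerance band, keep the current replica count to avoid
    # flapping on small metric fluctuations.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    desired = math.ceil(current_replicas * ratio)
    # Never go below or above the configured bounds.
    return max(min_replicas, min(max_replicas, desired))
```

For example, three replicas averaging 90% CPU against a 50% target scale to ceil(3 × 1.8) = 6, while a reading of 52% falls inside the tolerance band and leaves the deployment at three replicas.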

Cloud-native applications often adopt a microservices architecture, decomposing complex applications into small, independently deployable services. Each microservice focuses on a specific function or feature and communicates with the others via APIs. Because different teams can develop and deploy their microservices independently without affecting the entire application, this architecture fosters agility and makes updates easier. Containerization and orchestration enable precise scaling and resource control for each service, helping organizations adapt quickly to market changes and deliver features faster.
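The following Python sketch, using only the standard library, shows what communicating via APIs can look like: a hypothetical inventory microservice owns its data and exposes it over HTTP as JSON, and another service reads it through that API rather than touching the data directly. The service, the `/stock/<item>` route, and the data are invented for illustration; in production, each service would typically run in its own container behind the orchestrator's service discovery.

```python
import json
import threading
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer
from urllib.request import urlopen

# Hypothetical data owned by this one service; other services never read it directly.
STOCK = {"widget": 12, "gadget": 0}


class InventoryHandler(BaseHTTPRequestHandler):
    """A single-purpose 'inventory' microservice exposing GET /stock/<item>."""

    def do_GET(self):
        prefix = "/stock/"
        item = self.path[len(prefix):] if self.path.startswith(prefix) else None
        if item in STOCK:
            status, body = 200, {"item": item, "quantity": STOCK[item]}
        else:
            status, body = 404, {"error": "unknown item"}
        payload = json.dumps(body).encode()
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)

    def log_message(self, format, *args):
        pass  # silence per-request logging for the demo


def start_inventory_service(port: int = 0) -> ThreadingHTTPServer:
    """Serve in a background thread; port 0 lets the OS pick a free port."""
    server = ThreadingHTTPServer(("127.0.0.1", port), InventoryHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


def fetch_quantity(base_url: str, item: str) -> int:
    """What a caller (e.g. a hypothetical 'orders' service) would do.

    Raises urllib.error.HTTPError for unknown items (HTTP 404).
    """
    with urlopen(f"{base_url}/stock/{item}") as resp:
        return json.load(resp)["quantity"]


if __name__ == "__main__":
    server = start_inventory_service()
    host, port = server.server_address
    print(fetch_quantity(f"http://{host}:{port}", "widget"))
    server.shutdown()
    server.server_close()
```

Because the inventory service is reachable only through its HTTP interface, its team could rewrite or redeploy it independently as long as the `/stock/<item>` contract stays the same.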

Serverless Computing

Serverless computing is another key part of cloud-native application development. In this model, developers write code as functions that execute in response to specific events or triggers. Serverless platforms such as AWS Lambda and Azure Functions automatically manage the underlying infrastructure, abstracting away server provisioning and maintenance. This reduces operational overhead and can cut costs, since organizations pay only for the compute resources actually used during function execution.
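As a minimal sketch, here is a Python function written in the `handler(event, context)` style that AWS Lambda's Python runtime invokes, together with a rough pay-per-use cost estimate based on GB-seconds of execution plus a per-request charge. The order-total logic and the event shape are hypothetical, and prices are parameters rather than real rates; actual billing details vary by provider.

```python
import json


def handler(event, context):
    """Event-driven function in the (event, context) style used by AWS Lambda.

    The event shape ({"order_id": ..., "items": [...]}) is hypothetical; real
    events depend on the trigger (HTTP request, queue message, file upload, ...).
    `context` is unused here.
    """
    total = sum(item["price"] * item["qty"] for item in event["items"])
    # Return an HTTP-style response dict with a JSON body.
    return {
        "statusCode": 200,
        "body": json.dumps({"order_id": event["order_id"], "total": total}),
    }


def usage_cost(invocations: int, avg_duration_ms: float, memory_mb: int,
               price_per_gb_second: float, price_per_request: float) -> float:
    """Pay-per-use estimate: billing accrues only while functions execute.

    Prices are caller-supplied placeholders, not any provider's real rates.
    """
    gb_seconds = invocations * (avg_duration_ms / 1000) * (memory_mb / 1024)
    return gb_seconds * price_per_gb_second + invocations * price_per_request
```

Locally, the function can be called directly, e.g. `handler({"order_id": "A1", "items": [{"price": 5, "qty": 2}]}, None)`; on the platform, the runtime supplies the event and context objects. Note that zero invocations cost nothing under this model, which is where the savings over always-on servers come from.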

Serverless computing complements containerized microservices, allowing developers to choose the right computing model for each component of their cloud-native applications. It accelerates development cycles, fosters innovation, and minimizes the need for infrastructure management, making it a valuable asset in the cloud-native toolkit.

Conclusion

Deployment methods have undergone a significant evolution that has shaped the landscape of modern technology. From manual installation on physical machines to virtualization, containers, and the cloud, each step has brought remarkable changes. This transformation has reduced complexity for engineers, increased the reliability, scalability, and availability of services, and made businesses more agile in responding to market demands.

Looking forward, the future of software deployment holds exciting possibilities. Emerging technologies such as edge computing, serverless computing, and advanced AI-driven orchestration systems promise to optimize deployment processes further. Containers, in particular, provide a solid foundation for greater operations automation and for adopting Continuous Integration and Continuous Deployment (CI/CD) practices in cloud-native application development. As the digital world continues to evolve, innovation in deployment methods will keep redefining how businesses operate, giving them more agility, efficiency, and competitiveness in an ever-changing market.